Seeing AI. Photo by Microsoft

Microsoft has expanded its existing efforts to improve life for the visually impaired by developing an AI system capable of automatically generating high-quality image captions — and, in ‘many cases,’ the company says its AI outperforms humans. This type of technology may one day be used to, among other things, automatically caption images shared online to aid those who are dependent on computer vision and text readers.

Computer vision plays an increasingly important role in modern systems; at its core, this technology enables a machine to view, interpret and ultimately comprehend the visual world around it. Computer vision is a key aspect of autonomous vehicles, and it has found uses in everything from identifying the subjects or contents of photos for rapid sorting and organization to more technical applications like medical imaging.

In a newly published study [PDF], Microsoft researchers have detailed the development of VIsual VOcabulary (VIVO), a pre-training method that learns a ‘visual vocabulary’ from a dataset of paired image-tag data and is used to build an AI system that generates high-quality image captions. The result is an AI system that is able to create captions describing objects in images, including where the objects are located within the visual scene.

Test results found that at least in certain cases, the AI system offers new state-of-the-art outcomes while also exceeding the capabilities of humans tasked with captioning images. In describing their system, the researchers state in the newly published study:

VIVO pre-training aims to learn a joint representation of visual and text input. We feed to a multi-layer Transformer model an input consisting of image region features and a paired image-tag set. We then randomly mask one or more tags, and ask the model to predict these masked tags conditioned on the image region features and the other tags … Extensive experiments show that VIVO pre-training significantly improves the captioning performance on NOC. In addition, our model can precisely align the object mentions in a generated caption with the regions in the corresponding image.
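The masking step the researchers describe can be illustrated with a minimal Python sketch. This is not Microsoft's code: the function name `mask_tags`, the `[MASK]` placeholder and the 15 percent masking probability are assumptions made for illustration, and the Transformer that would actually predict the hidden tags from the image region features is left out entirely.

```python
import random

MASK = "[MASK]"  # hypothetical placeholder token, not taken from the study


def mask_tags(tags, mask_prob=0.15, rng=None):
    """Randomly hide one or more tags from an image's tag set.

    Returns (masked_tags, targets), where targets maps each masked
    position to the original tag the model would be asked to predict.
    mask_prob is an assumed value chosen for illustration.
    """
    rng = rng or random.Random()
    if not tags:
        return [], {}
    masked = list(tags)
    targets = {}
    for i, tag in enumerate(tags):
        if rng.random() < mask_prob:
            masked[i] = MASK
            targets[i] = tag
    if not targets:
        # The study says "one or more tags", so always mask at least one.
        i = rng.randrange(len(tags))
        targets[i] = masked[i]
        masked[i] = MASK
    return masked, targets


if __name__ == "__main__":
    masked, targets = mask_tags(["dog", "frisbee", "grass"])
    print(masked, targets)
```

In a real training setup, the masked tag list would be fed to the model together with the image region features, and the model would be scored on how well it recovers the entries in `targets`.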

Microsoft notes alternative text captions for images are an important accessibility feature that is too often lacking on social media and websites. With these captions, individuals with vision impairments can use screen-reader technology to have the captions read aloud, giving them insight into images they may otherwise be unable to see.
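As a rough illustration of how a tool might spot the gap Microsoft describes, the following Python sketch scans a snippet of HTML for images that lack alt text. It uses only the standard library; the class and function names are hypothetical, and the captioning step that would fill in the missing text is not shown.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collects the src of every <img> that has no usable alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        alt = attr_map.get("alt")
        # Note: an empty alt can be intentional for purely decorative
        # images; this sketch flags it anyway for manual review.
        if alt is None or not alt.strip():
            self.missing.append(attr_map.get("src"))


def find_images_missing_alt(html):
    """Return the src values of images in `html` without alt text."""
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing


if __name__ == "__main__":
    page = '<p><img src="a.png" alt="A dog"><img src="b.png"></p>'
    print(find_images_missing_alt(page))
```

Each image returned by such a check is a candidate for an automatically generated caption.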


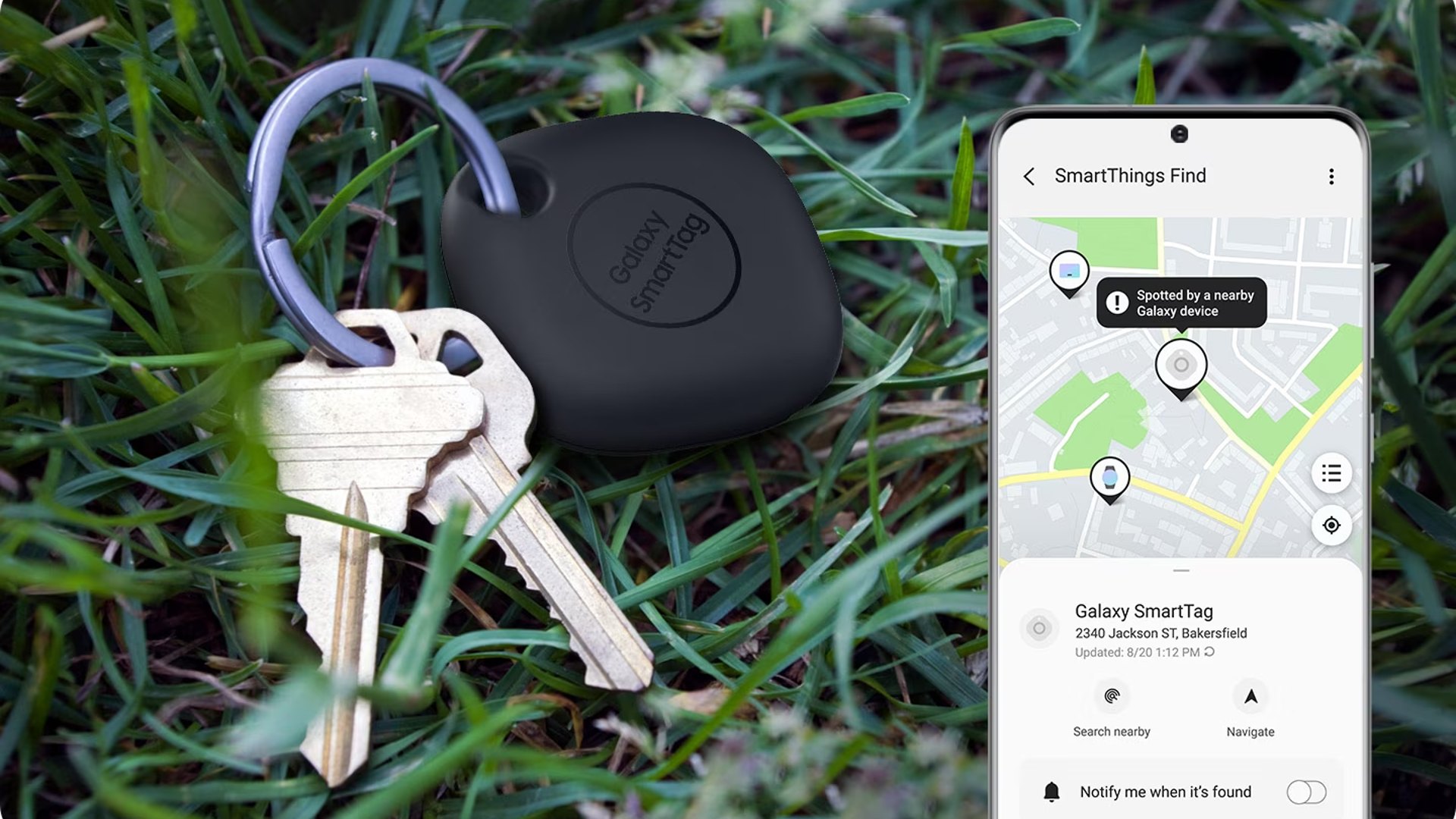

The company previously introduced a computer vision-based product designed specifically for the blind called Seeing AI, which is a camera app that audibly describes physical objects, reads printed text and currency, recognizes and reports colors, and performs other similar tasks. The Seeing AI app can also read image captions — assuming captions were included with the image, of course.

Microsoft AI platform group software engineering manager Saqib Shaikh explained:

‘Ideally, everyone would include alt text for all images in documents, on the web, in social media – as this enables people who are blind to access the content and participate in the conversation. But, alas, people don’t. So, there are several apps that use image captioning as a way to fill in alt text when it’s missing.’

That’s where the expanded use of artificial intelligence comes in. Microsoft has announced plans to ship the technology to the market and make it available to consumers through a variety of its products in the near future. The new AI model is already available to Azure Cognitive Services Computer Vision customers, for example, and the company will soon add it to some of its consumer products, including Seeing AI, Word and Outlook for macOS and Windows, as well as PowerPoint for Windows, macOS and web users.